The happiest countries and findings from the World Happiness Report 2026

Finland, Iceland, Denmark lead the 2026 ranking. Full list of 147 countries, key findings on social media and wellbeing, and how your donations create happiness.

Co-founder and director of Mieux Donner

Reading time: 30 min.

It would seem that in France, impact measurement has become the new norm for every charity project. Specialist consultancies are springing up everywhere, charities are appointing “impact managers” and endowment funds are proudly displaying their determination to improve the impact of their funding and calling themselves “impact funds”. This proliferation gives the impression of a real boom in the way we assess the real impact of our actions. However, behind this apparent effervescence lies a much more nuanced, even alarming reality: much of what is sold under the term “impact measurement” lacks rigour, and may even be no more than a communication tool.

For some people, measuring impact is a way of checking that the action taken is producing certain intermediate effects or is part of a continuous improvement process. But this misses the essential point: helping the beneficiaries. To do this, we need to look at the ultimate impact on the target audience and use clear, objective and comparable measures. It’s not just a question of identifying areas for improvement, but also – and above all – of being able to close down programmes that don’t show any social impact and direct resources towards those that help more.

It is important to emphasise that the main victims of these practices are people in need. In fact, when evaluations do not allow interventions to be compared objectively, it becomes impossible to allocate resources in such a way as to genuinely help the most disadvantaged.

There are several reasons for this situation in France:

In this article, we propose to take an in-depth look at current impact measurement practices in France, comparing them with rigorous methods applied internationally. We will analyse the differences, their consequences for beneficiaries – often the most disadvantaged – and propose avenues for reform so that impact measurement can truly serve to direct funding towards the most effective interventions.

Today, you might think there’s a real boom in impact measurement in France. Specialist consultancies are springing up all over the place, and associations, under pressure from funders and the general public, are appointing “impact managers” to prove that they are making a difference. There are now networks bringing together hundreds of impact measurement practitioners and organisations.

However, this proliferation of initiatives masks a much cruder reality: most of the methods used are based on qualitative evaluations, often tailor-made, which put forward vague indicators – such as the percentage of donations going directly to beneficiaries or the number of people “helped” – without really measuring the real cost per unit of impact.

One of the major shortcomings of this approach is that impact measurement is often used to justify existing funding rather than to guide new decisions. The idea of avoided cost to society (SROI – Social Return on Investment) could be a significant step forward. In theory, it would make it possible to compare different interventions and direct funding towards those that offer the best social return. In practice, however, the concept is too often applied ad hoc, with disparate methods. Rather than providing an objective comparison, SROI is mainly used to legitimise a project that is already in place, without ever questioning whether there is a more effective alternative.

Another problem with this system is that the results of the evaluations remain largely confidential. There is virtually no sharing between organisations, which makes comparative analysis impossible. Each association funds its own isolated study, the conclusion of which generally boils down to “very high impact, keep doing what you’re doing”, without ever recommending that funds be reallocated to more effective projects. This vicious circle leads to a status quo where everyone continues to act “as they already know how”, without really taking into account the needs of beneficiaries or the relative performance of interventions.

Monitoring and evaluation (M&E) is an essential process for any organisation seeking to structure its action and ensure the transparency of its activities. Monitoring involves collecting data on the implementation of a programme (e.g. number of beneficiaries reached, volume of activities carried out), while evaluation aims to analyse the effects of these actions and draw lessons from them. The effects analysed do not always reflect the final impact sought, but often intermediate indicators that can be observed in the field. These two stages are an essential basis for steering a project, guaranteeing its operational effectiveness and optimising the resources allocated.

Many organisations focus solely on monitoring the activities implemented (number of beneficiaries reached, quantity of services provided) without asking themselves whether these interventions have really improved the situation of the beneficiaries.

Let’s take a fictitious case study:

A foundation funds a programme to distribute free books to disadvantaged children. The evaluation shows that 5,000 books were purchased, 99% were delivered, and many children now have them in their bedrooms. On this basis, the project is considered a success.

Rather than focusing on the number of books distributed, an analysis of the effectiveness of the intervention would ask key questions: has this initiative really improved literacy rates? To what extent, and compared with other available options?

It may be that promoting positive messages to parents increases school attendance and reading achievement more, and at a lower cost than distributing books. With this in mind, if the aim is to improve education in the most effective way possible, resources should be reallocated accordingly.

However, it is important to note that monitoring and evaluation remain essential to ensure real impact: for example, by measuring the number of treatments administered and taken to assess their effectiveness. The impact-based approach does not replace the monitoring of activities, but integrates it into a broader reflection on how best to achieve the objectives set.

The Against Malaria Foundation (AMF), which distributes insecticide-treated mosquito nets to combat malaria, is often cited as a model of rigorous monitoring and evaluation (M&E) and measurable impact. Every donation is tracked and every stage of the intervention scrutinised. AMF publishes detailed distribution data online, with maps, photos and monitoring reports. What makes AMF exceptional is not its M&E per se, but that the organisation uses its capacities for interventions that have proven impacts on health and the fight against poverty. It is recognised by GiveWell, an independent evaluator, as one of the most effective associations in the world.

Internationally, evaluators use standardised indicators such as DALYs, LAYS and WELBYs to objectively measure the impact of an intervention, enabling direct comparison between very different projects:

In France, it has to be said that the majority of evaluators are content to create bespoke indicators for each project. This approach, although personalised, prevents any comparison between interventions, making it impossible to prioritise actions rationally. As a result, it becomes difficult for funders to know where their funds could have a much greater impact.

Another major pitfall is the almost systematic absence of counterfactual reasoning in French evaluations. To measure the real impact, it is essential to compare what happened with and without the intervention. For example, an association that distributes school supplies might seem very effective, but without analysing what would have happened in the absence of this distribution, there is a risk of overestimating its impact, for example if people already had supplies or if they had planned to buy them. This gap makes it impossible to identify the real gaps and direct funding towards the most effective interventions.

In France, impact measurement is mainly based on qualitative assessments, such as satisfaction surveys and illustrative case studies. These approaches often focus on the “good stories” and positive perceptions of beneficiaries, without ever quantifying the impact in terms of comparable units. This methodological choice, which favours validation of the project rather than comparative analysis, leads to a lack of scientific rigour and prevents a more informed allocation of resources.

We are highlighting quantitative approaches because they can be used in more cases than we think, but it is possible to prioritise beyond numbers.

The best charities are distinguished by their ability to rely on robust scientific evidence. In particular, they use randomised controlled trials (RCTs) and meta-analyses to demonstrate the effectiveness of their actions. These practices make it possible to accurately measure the cost per life saved or per DALY avoided. Unfortunately, very few evaluators in France adopt these rigorous methods. The majority of evaluations are based on assessments without a scientific method, which limits the ability to objectively compare the impact of interventions and prioritise funding accordingly.

Impact assessment in France suffers from excessive fragmentation, with each organisation conducting its own studies without a common framework. This multiplication of evaluations leads to additional costs, which could be better invested in the interventions themselves. What’s more, the lack of standardisation prevents any rigorous comparison between projects, making it difficult to identify the most effective actions. Studies show that it is often possible to help 100 times more people for the same cause with the same budget, but these opportunities remain largely unexplored for lack of a truly comparative evaluation system.

This problem is compounded by the limited dissemination of results. Unlike evaluators such as GiveWell, who publish their analyses in full to enable criticism and comparison, French firms generally keep their studies confidential. This lack of transparency prevents the pooling of knowledge and limits the ability of funders to effectively redirect their funding towards the most cost-effective interventions. As a result, evaluations are more often used to justify existing funding than to question current choices and optimise the impact of allocated resources.

Contrary to popular belief in France, assessing the impact of a project does not require an exorbitant budget or years of bespoke research by external consultants. Tools exist, databases are accessible, and rigorous methods are already in use elsewhere. Here are a few best practices for rapidly assessing the impact of an intervention without wasting time and resources.

Before funding a costly impact assessment, an obvious question needs to be answered: has anyone assessed a similar project elsewhere?

French consultancies seem to be unaware that much of the evaluation work has already been done internationally. Education, anti-poverty and public health projects have already been evaluated using robust methodologies. It is often enough to do a search in English to find usable results, but this simple reflex is too often neglected – resulting in the funding of projects that do little or nothing to help the beneficiaries.

Search academic databases: Google Scholar, ResearchGate and SSRN bring together thousands of open-access publications.

Charity Entrepreneurship proposes a process that starts with a ten-minute first evaluation, using a few columns and scores against relevant criteria, to obtain an order of magnitude. With a slightly more detailed spreadsheet, a pre-evaluation can be refined in an hour or two, helping decision-makers direct funding better. Investing this time upfront is a simple way to avoid high costs in the long run by ensuring that resources are used effectively.
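As a rough illustration of what such a quick scoring sheet could look like, here is a minimal Python sketch. It is not Charity Entrepreneurship's actual template: the criteria names, the weights and the scores are all hypothetical and would need to be replaced with criteria relevant to the decision at hand.

```python
# Hypothetical criteria and weights, chosen only for illustration.
CRITERIA_WEIGHTS = {
    "strength_of_evidence": 4,
    "expected_cost_effectiveness": 4,
    "ease_of_implementation": 2,
}


def weighted_score(scores, weights=CRITERIA_WEIGHTS):
    """Return the weighted average of 1-5 scores, one score per criterion."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(weights[c] * scores[c] for c in weights) / total_weight


# Hypothetical options, each scored from 1 (weak) to 5 (strong).
options = {
    "Option X (hypothetical)": {
        "strength_of_evidence": 4,
        "expected_cost_effectiveness": 5,
        "ease_of_implementation": 3,
    },
    "Option Y (hypothetical)": {
        "strength_of_evidence": 2,
        "expected_cost_effectiveness": 2,
        "ease_of_implementation": 5,
    },
}

# Rank options from highest to lowest weighted score.
ranking = sorted(options, key=lambda name: weighted_score(options[name]), reverse=True)
for name in ranking:
    print(f"{name}: {weighted_score(options[name]):.1f}")
```

A score like this only gives an order of magnitude. Its value lies in forcing an explicit comparison between options before any costly study is commissioned.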

Academic publications and analyses by specialist organisations are a gold mine for anyone who wants to understand the real impact of an intervention. Yet they are very little used by French impact measurement players.

Some resources are particularly valuable for identifying high-impact projects:

Search academic databases: Google Scholar, ResearchGate and SSRN bring together thousands of open-access publications.

Independent evaluators and research organisations

In many cases, a simple critical reading of these resources would enable us to make much more informed decisions – and at lower cost.

Effective altruism is an approach that combines empathy and rationality to identify interventions with the greatest positive impact. Unlike traditional approaches, this community adopts a rigorous scientific method to direct resources towards the most effective solutions.

The Effective Altruism forum offers a wealth of resources: thousands of posts on the best evaluation and prioritisation methods, debates on the criteria to use and best practice.

Why is this community so valuable in helping us direct our resources more effectively?

Here are a few organisations that respect our selection principles and are considered to have an impact because they make the best use of funds to help others:

Helen Keller International: This charity offers life-saving vitamin A supplementation programmes.

New Incentives: This charity works to increase child vaccination rates.

Good Food Institute: This association promotes research into alternative proteins, defending a diet free from animal suffering and more respectful of the planet.

In France, evaluation is not generally carried out with altruism in mind, or with the primary aim of helping as effectively as possible. It is not an impartial approach that seeks to identify the best ways to help, but often an administrative requirement to obtain funding.

At Mieux Donner, evaluation is not about producing figures to justify funding or fill in reports, but about multiplying our impact tenfold. Each donation can transform lives, and rigorous analysis of charities enables us to identify those that do the most good. Ignoring this reality would mean agreeing to help less when we could be helping more. Every action counts, and it is by putting efficiency at the heart of our decisions that we can really make a difference. We hope that more and more players will join us in this approach.

Non-profit organisations need funds to continue to exist and carry out their projects. But asking a charity to rigorously evaluate its effectiveness is, in some cases, tantamount to asking it to prove that its project is not working or that another project would have had a greater impact.

The paradox of incentives:

A culture of ‘doing’ rather than ‘comparing’

We’ll see in the next section that prioritising and seeking to be among the most efficient can be an excellent way of finding funding.

The impact measurement market in France is largely based on consultancies that sell studies to charities and funders. However, their business model favours bespoke evaluations, making it impossible to compare projects. Rather than adopting standardised methodologies, these firms each develop their own analysis tools, making the results opaque and difficult to compare.

This lack of standardisation maintains a system where funding decisions are not based on objective cost-effectiveness criteria, but on reports produced in a self-validating manner. Without a common evaluation framework and systematic data sharing, it is impossible to clearly identify which interventions should be prioritised.

This misalignment of interests has a direct consequence: resources are dispersed inefficiently, to the detriment of beneficiaries who could be better helped if funds were allocated to interventions with the greatest impact.

Why would standardisation call this business model into question?

Yet a new business model is conceivable. Some firms could set themselves apart by adopting rigorous and transparent methodologies, based on comparative and standardised evaluations. If these analyses really helped to guide decisions and arbitration, they would become a real tool for foundations and philanthropists.

By requiring this type of evaluation, these funders would encourage the entire sector to move towards higher standards. This would create a virtuous circle in which charities, mindful of their impact, would adopt the most effective methods, thereby ensuring that the resources allocated actually benefit those in need.

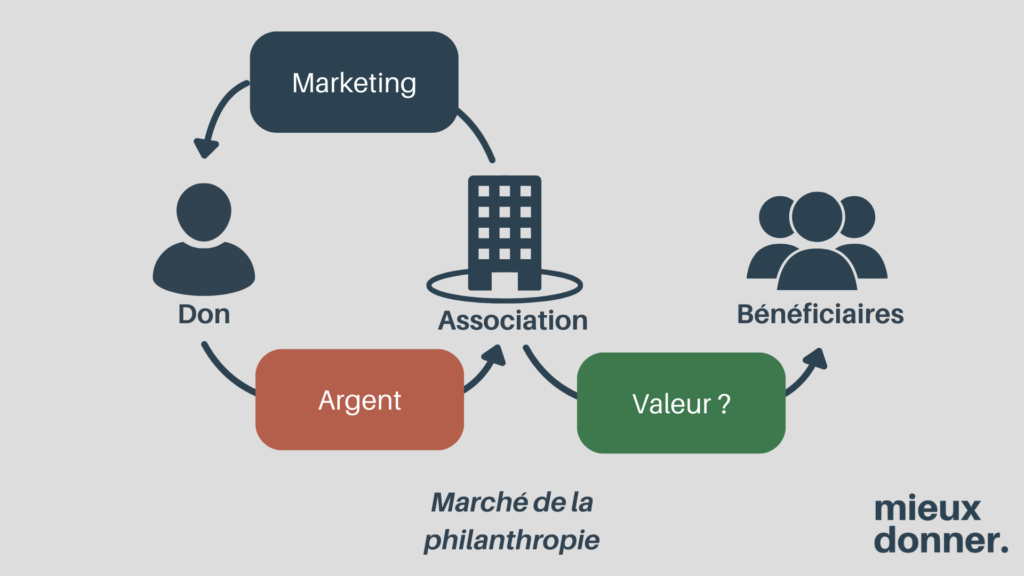

The money donated to the associations does not come directly from the beneficiaries, but from individuals, foundations or public institutions. These funders have different motivations and constraints to those of the people receiving the aid.

Why don’t funders push for real impact measurement?

The risks of a lack of accountability

However, a growing movement in favour of more effective generosity is developing in France. By taking part, it is possible to direct resources towards actions that transform the most lives and to strengthen a culture of impact at the service of beneficiaries.

In a market where non-profit organisations must first and foremost secure their funding, impact measurement is becoming a validation tool rather than a means of prioritisation.

True impact measurement should make it possible to compare interventions and redirect resources to those that have the greatest effect. Yet this approach is virtually absent in France.

Funders do not require standardised indicators to enable projects to be compared objectively. They are content to see an “impact assessment” line in grant applications, without trying to understand whether these assessments really enable funds to be better allocated.

This lack of a selection mechanism is one of the reasons why efficiency can differ by a factor of up to 100 between two projects serving the same cause, without these differences being visible to funders.

As it stands, the French impact measurement system is not fulfilling its role. It produces costly reports that do not enable better decisions to be taken and that justify funding without ever questioning the allocation of resources.

As a result, resources are not allocated to interventions that could really multiply the impact on beneficiaries. This system, designed to protect existing interests, does not serve the funders, and certainly not the people who should benefit from these interventions.

Despite the existence of rigorous tools and methodologies that are widely used abroad, almost no French players seem to be familiar with them or to be incorporating them into their practices. GiveWell, the world’s leading charity evaluator, nevertheless freely shares its analyses and tools, and appears among the first search results with terms such as “cost effectiveness charity” or “charity evaluator”.

Yet it is possible to talk to dozens of people in the sector without a single one of them even knowing GiveWell by name. Worse still, the majority of players specialising in impact measurement rely neither on the scientific literature (white literature) nor on analyses produced by organisations such as Founders Pledge (grey literature), even though a simple search would sometimes reveal that some of the interventions they fund or evaluate have not proved to have a positive impact.

This lack of rigour is mirrored in the academic world: you can get a master’s degree in humanitarian aid having spent just a few hours on evidence-based approaches and without having learnt how to evaluate a project using a few key criteria. You get your degree without having heard of GiveWell, whereas if you’re studying to help the most disadvantaged, discovering the most effective interventions in the world would seem to me to be a good starting point.

France’s philanthropic sector has few links with the academic and scientific world, particularly when it comes to development economics. Unlike in the United States or the United Kingdom, where think tanks and universities work hand in hand with NGOs, in France these two worlds remain largely separate.

Many French associations continue to favour qualitative and narrative approaches rather than quantitative impact measurements. The idea that scientific evaluation might challenge established practices is often perceived as a threat rather than an opportunity.

How do you explain this difference with the Anglo-Saxon world? For a long time, France was characterised by a model in which the State played a central role in redistribution and the funding of social services. Historically, the major advances in solidarity (social security, family allowances, public health) have been steered by the State and not by private initiatives.

This centralisation has led to a persistent mistrust of private players, particularly philanthropists, who are seen as competing alternatives to public policy. As a result, philanthropy in France has often been limited to a complementary role, focused on humanitarian or social actions with no claim to measurable effectiveness.

Conversely, in countries such as the United States and the United Kingdom, the philanthropic tradition is based more on a decentralised and entrepreneurial approach. The state has historically played a more limited role in social aid, which has given way to a more structured philanthropic sector. In these contexts, impact measurement is rapidly emerging as a key tool for directing funding towards the most effective actions.

Of course, we don’t need to adopt the Anglo-Saxon model in order to benefit from these discoveries: we can perfectly well combine a central State with an impactful, abundant and rigorous social aid system, fuelled by committed public research. This does not replace the role of the State, but it does allow us to act lucidly within the framework of existing projects. It would be naïve to believe that the free market can do everything. It is no substitute for public policy, tax redistribution or the regulation of structural inequalities.

In France, giving is often perceived as an act of solidarity rather than an action to help as best we can. The dominant discourse in philanthropy emphasises personal commitment, support for local causes and the importance of “doing one’s bit”, without necessarily questioning the real impact of the funds raised. Philanthropy is centred around the people who give and not around the people in need.

This approach is reflected in the behaviour of French donors, who often favour well-established associations that are known to the general public, without seeking to compare the effectiveness of their actions with those of other organisations. In the absence of a strong demand for rigorous evaluations, the associations themselves have little incentive to adopt measures of transparency and cost-benefit performance.

In countries where impact measurement is used to identify the best opportunities for donations, organisations have every interest in demonstrating that they are more effective than their competitors in order to attract funds. In the United States, GiveWell, Founders Pledge and other independent evaluators were born of this dynamic: faced with a multitude of associations seeking to capture the attention of major donors, the organisations that were most rigorous in evaluating their impact were able to stand out.

In France, this approach is virtually non-existent. The big charities benefit from strong institutional recognition and recurrent funding, without having to prove that they are effective. Private generosity and public funding often favour an organisation’s notoriety and seniority over an analysis of its real impact, which perpetuates the status quo.

France has produced one of the most influential figures in impact evaluation in Esther Duflo, co-winner of the Nobel Prize in Economics in 2019 with Abhijit Banerjee and Michael Kremer. Her work has revolutionised the way in which development policies are evaluated, by highlighting the importance of randomised controlled trials (RCTs) to rigorously measure the impact of interventions. Yet her influence is far more marked abroad than in France.

While Esther Duflo’s research has been widely adopted by international organisations, governments and NGOs around the world, it remains surprisingly little used in the French voluntary sector. Few of the major foundations or institutional players incorporate the approaches she has defended, and even fewer associations apply the methodologies she has popularised.

What is even more striking is that some of the biggest players in philanthropy in France continue to claim that it is impossible to measure the impact of certain interventions, particularly in education. Yet more than 30 years ago, Michael Kremer – co-winner of the Nobel Prize with Duflo – published studies demonstrating that it was possible to rigorously evaluate the effectiveness of educational programmes by comparing different interventions. Dozens of randomised controlled trials have since confirmed these results, and organisations such as J-PAL (co-founded by Duflo) have developed databases on the subject.

It is essential to adopt internationally recognised indicators to measure impact objectively. The systematic use of metrics such as DALYs, LAYS, WELBYs or avoided tCO₂e would make it possible to compare very diverse interventions on a common scale. By dividing the results by the total cost of the intervention, it would be possible to obtain a cost-effectiveness ratio that would guide trade-offs between different projects.
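The ratio described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the programme names, costs and DALY figures are hypothetical, and DALYs averted is used as the common unit purely as an example.

```python
from dataclasses import dataclass


@dataclass
class Intervention:
    name: str
    total_cost_eur: float   # full cost of the intervention, in euros
    dalys_averted: float    # outcome expressed in a standardised unit

    def dalys_per_1000_eur(self) -> float:
        """Outcome divided by cost, scaled to 1,000 euros."""
        return self.dalys_averted * 1000 / self.total_cost_eur

    def cost_per_daly(self) -> float:
        """The same ratio inverted: euros needed to avert one DALY."""
        return self.total_cost_eur / self.dalys_averted


def rank_by_cost_effectiveness(interventions):
    """Return interventions ordered from cheapest to most expensive per DALY."""
    return sorted(interventions, key=lambda i: i.cost_per_daly())


# Hypothetical figures, for illustration only.
programmes = [
    Intervention("Programme A (hypothetical)", 100_000, 50),
    Intervention("Programme B (hypothetical)", 100_000, 500),
    Intervention("Programme C (hypothetical)", 40_000, 10),
]

ranked = rank_by_cost_effectiveness(programmes)
for p in ranked:
    print(f"{p.name}: {p.cost_per_daly():,.0f} EUR per DALY averted")
```

Because all three programmes are expressed in the same unit, the ranking is immediate, which is not possible when each project defines its own bespoke indicator.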

To avoid overestimating the impact, a counterfactual analysis should be systematically incorporated into evaluations. This approach consists of comparing the results obtained thanks to the intervention with those that would have occurred in its absence. This makes it possible to measure the real net impact and to identify the interventions that genuinely generate an additional positive effect.
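To make the difference between gross and net impact concrete, here is a minimal Python sketch. The numbers are hypothetical, and the "without" figure is assumed to come from a comparison group or another credible estimate of what would have happened anyway.

```python
def net_impact(outcome_with, outcome_without):
    """Additional outcome attributable to the intervention."""
    return outcome_with - outcome_without


def cost_per_additional_outcome(total_cost, outcome_with, outcome_without):
    """Cost per unit of net impact, or None if there is no additional effect."""
    additional = net_impact(outcome_with, outcome_without)
    if additional <= 0:
        return None
    return total_cost / additional


# Hypothetical example: a school supplies distribution costing 20,000 euros.
total_cost = 20_000
children_equipped_with = 1_000     # children who have supplies after the distribution
children_equipped_without = 700    # assumed: children who would have had them anyway

naive_cost = total_cost / children_equipped_with
adjusted_cost = cost_per_additional_outcome(
    total_cost, children_equipped_with, children_equipped_without
)

print(f"Net impact: {net_impact(children_equipped_with, children_equipped_without)} children")
print(f"Naive cost per child equipped: {naive_cost:.2f} EUR")
print(f"Cost per additional child equipped: {adjusted_cost:.2f} EUR")
```

In this made-up case, ignoring the counterfactual makes the programme look more than three times cheaper per child than it really is.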

The publication and centralisation of evaluation results is essential to enable comparative analysis between projects. Open sharing of data would strengthen funders’ ability to identify the most effective interventions and to direct funding accordingly. A common database, accessible to all stakeholders, would help to improve understanding of the real impact of different initiatives.

Individuals and funders have a key role to play in the adoption of true impact measurement. Rather than funding projects based on vague or emotional criteria, they must demand rigorous proof of effectiveness. This means favouring organisations that publish cost-effectiveness analyses, incorporate counterfactual reasoning and use standardised indicators. By directing funding towards the most effective interventions, they can increase their impact tenfold and contribute to a real transformation of the voluntary sector.

Prioritising means looking each programme in the face: what is its cost per beneficiary? Is there an alternative that is ten or even a hundred times more effective? Closing or downsizing an underperforming project is not an admission of failure; it is an act of loyalty to its raison d’être. Organisations that dare to do this gain tremendous leverage: with the same budget, they reach more priority regions, more people and save more lives. They also become trailblazers: by demonstrating that a rigorous arbitration culture is possible, they inspire the entire sector to aim higher.

I’ve also had several cases where associations, by starting to talk in terms of cost-effectiveness and comparing themselves with other opportunities, have seen their financial support increase. Even more surprisingly, some associations decided to close their main programme after a rigorous evaluation, because it was not the best use of their resources. The people who supported them were all the more motivated to support them, because they could see that their approach was sincere.

In a market where funders scrutinise the added value of every euro, displaying a transparent prioritisation strategy is a powerful differentiator: it’s the promise, backed up by figures, that every donation generates a real impact. Foundations and associations that adopt this approach find it easier to attract new partners – up to several million additional euros a year – and benefit from rare media coverage: “the first French foundation to close a popular programme to fund one that is a hundred times more effective” would be a memorable story.

Mieux Donner provides a dedicated team to guide each structure, whatever its size: rapid portfolio audit, selection of appropriate international indicators (DALY, tCO₂e, WELBY, etc.) and construction of a ready-to-use prioritisation table. We can train your teams in the basics of counterfactual reasoning, share open reporting templates, then track progress, all without charging a penny, because our funding is already secured and our only mission is to help.

This support is also aimed at consulting firms looking to differentiate themselves: adopting a standardised and transparent methodology is the surest way to offer clients measurable value. Joining this dynamic means gaining access to an international network of experts and peer reviews. Together, we can make prioritisation the norm and prove that efficiency and credibility are two sides of the same coin.

Impact measurement in France, as currently practised, appears to be an illusion that diverts resources and maintains an ineffective status quo, to the detriment of the most disadvantaged. Despite the existence of comparable tools and rigorous methodologies – such as cost-benefit analysis incorporating counterfactual reasoning and the use of standardised indicators such as DALY, LAYS, WELBY or avoided tCO₂e – their adoption remains marginal in the French non-profit sector.

The tailor-made evaluations carried out by numerous consulting firms, whose business model is based on the absence of standardisation and the lack of dissemination of results, prevent objective comparisons between projects. This system, which favours the justification of existing funding rather than the targeting of more effective interventions, generates administrative costs and limits the ability of funders to arbitrate between initiatives that may be very different in terms of social return.

Furthermore, the lack of systematic sharing of evaluations and ignorance of proven approaches abroad – despite the availability of robust studies and rich international resources such as those implemented by GiveWell or Founders Pledge – prevent the effective rethinking and reallocation of available funds.

It is therefore imperative to transform impact measurement in France so that it becomes a genuine decision-making tool, focused on rigorously assessing the real effectiveness of interventions. To achieve this, standardised and comparable methodologies should be adopted, counterfactual analyses should be systematically integrated, and the publication and sharing of evaluation results should be encouraged. Such an approach will not only reduce the fragmentation caused by the proliferation of tailor-made evaluations, but also better target funding towards projects capable of generating real change for beneficiaries.

Actors wishing to help, whether public or private, must therefore be encouraged to demand rigorous and transparent evaluations. By targeting their resources on the basis of comparable indicators and scientifically validated methods, they can contribute to a more relevant reallocation of funds, so that every euro invested really serves to improve the lives of the most disadvantaged. It is time to rethink the impact measurement sector in France in order to transform what is currently a tool for justification into a powerful lever for directing aid towards interventions that really make a difference.
