Artificial Intelligence: the OECD's principles for developing trustworthy AI

Ombline Planes

Director of Communications

Reading time: 5 minutes

Artificial intelligence (AI) is rapidly transforming our societies. It is reshaping education systems, labor markets, healthcare, and even the way we engage in democratic dialogue. But is this technological revolution truly serving the public interest? Can it respect human rights and democratic values? According to the OECD (Organisation for Economic Co-operation and Development), the answer is yes—provided that AI development and deployment are structured around clear, flexible, and evidence-based principles.

In 2019, the OECD created a reference framework grounded in human-centered values to ensure that AI genuinely benefits society. At Mieux Donner, we share this belief. This is why we support the Centre pour la Sécurité de l’IA (CeSIA), a French initiative that raises awareness and equips both citizens and policymakers to address the risks posed by advanced AI. We believe trustworthy AI is possible if its implications are understood, its effects monitored, and resources directed toward the most effective projects.

The OECD AI principles: a foundation to guide AI stakeholders

In 2019, the OECD adopted five guiding principles for governing the development of AI systems. These principles, also adopted by the G20, are now a global reference framework. They recommend that all AI should:

- be beneficial to humans and sustainable development,

- respect human rights and democratic values,

- be based on transparency, traceability, and explainability,

- be technically robust and secure,

- hold AI stakeholders accountable.

These principles guide the implementation of the OECD AI Code of Conduct and help establish standards for AI that are practical and flexible enough to keep pace with rapid technological change.

Measuring AI capabilities: the OECD's approach

To help member countries track the rapid progress of AI, the OECD created the AI Capability Indicators. These indicators make it possible to:

- assess a country’s ability to develop and deploy responsible AI.

- understand performance in innovation, governance, and infrastructure.

- identify gaps and guide effective AI policies.

You can check these indicators online.

This kind of monitoring is essential. It enables decision-making based on solid datasets rather than reactive choices. At Mieux Donner, this evidence-based approach is central to our mission: we recommend projects that combine scientific rigor, proven effectiveness, and measurable impact.

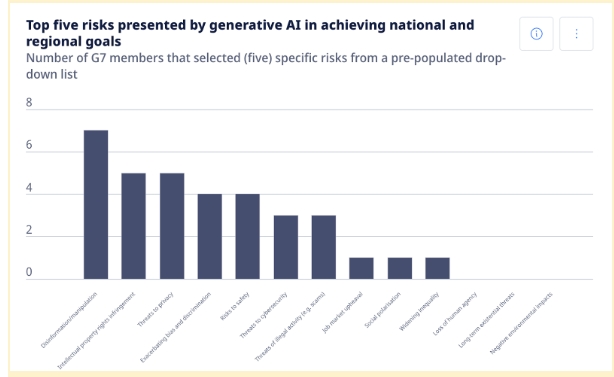

Generative AI: opportunities and security challenges

Alongside its opportunities, generative AI raises several risks:

- Large-scale disinformation.

- Privacy violations.

- Algorithm-driven bias and discrimination.

- Polarization of opinions.

- Difficulty managing risks related to content traceability.

That’s why the OECD encourages algorithmic transparency, accountability, and standards that guide AI actors towards safer practices.

Jobs, training, and human skills: AI transforms work

According to the OECD, AI has the potential to profoundly transform labor markets. It automates certain tasks, changes others, and requires new technical and ethical AI skills.

The OECD’s recommendations are clear:

- invest in training and retraining.

- promote lifelong learning.

- support the development of skills needed for the responsible management of AI systems.

AI and education: transforming learning with ethics

The OECD recognizes that AI can personalize teaching, identify students’ difficulties more quickly, and promote inclusion. But it also warns of risks: algorithmic bias, unsafe data use, and unequal access to technology.

To address these challenges, schools and education systems must:

- integrate AI ethically.

- train teachers in AI tools.

- ensure equal access and respect for privacy.

Global governance: a shared responsibility

The OECD acts as a platform for international coordination, in cooperation with the G7, the European Union, UNESCO, and other major institutions. It facilitates exchanges between states, the scientific community, NGOs, and businesses, and promotes mechanisms for shared governance.

Your donations can help reduce AI risks

Artificial intelligence is one of the most strategic fields for humanity’s future. Efforts to develop responsible AI must be supported by targeted, transparent, and measurable funding.

CeSIA is currently one of the few high-impact French-speaking initiatives in this field. In 2024, the association:

- reached over 900,000 people through online awareness campaigns.

- trained more than 120 professionals in AI regulation.

- helped develop AI safety training modules for leading universities such as ENS Ulm and ENS Paris-Saclay.

CeSIA draws on the latest peer-reviewed research to design its training programs, directly integrating key issues of responsible AI into its modules. Its team works closely with academia, international institutions, and local elected officials to equip them to address the ethical, technical, and social challenges of AI—such as AI system governance, algorithm regulation, civic oversight, and cybersecurity.

Convinced that a well-informed society is better able to demand AI that respects human rights and fundamental freedoms, CeSIA offers hybrid formats and practical workshops in companies and institutions to help implement the OECD’s recommendations on future AI risks.

By building a real bridge between academic research, policymaking, and civil society—and through partnerships with international institutions—CeSIA strengthens human capacities rather than delegating them blindly to technology, while actively promoting the effective policies recommended by the OECD.

These results are made possible by private donations. By supporting CeSIA, you help develop AI that is trustworthy, respects human rights, and strengthens human capabilities rather than replacing them.

What you can do today...

- Visit the CeSIA program page.

- Explore the OECD.AI platform for tools and publications on AI.

- Support Mieux Donner to help structure philanthropic initiatives that have a measurable impact on the future of AI.