The happiest countries and findings from the World Happiness Report 2026

Finland, Iceland, Denmark lead the 2026 ranking. Full list of 147 countries, key findings on social media and wellbeing, and how your donations create happiness.

Co-founder and Director of Mieux Donner

Reading time: 17 min.

Cost-effectiveness analyses (CEAs) are among the most powerful tools we have for guiding our donations. They enable us to evaluate and compare different interventions by measuring results against costs.

At Mieux Donner, we rarely produce our own analyses, but select the best available evaluations, carried out by independent organisations such as GiveWell or Giving Green. Our role is to identify the interventions with the greatest measured impact and to make this information accessible.

But while cost-effectiveness analyses are an essential tool, they should not be taken at face value. A naïve reading of the results can lead to mistakes: comparing figures that are not comparable, overlooking uncertainties, or forgetting elements that are difficult to quantify. We use these analyses rigorously, bearing in mind their limitations, and supplement them with other approaches to make informed decisions.

This article explores our approach: how we use cost-effectiveness analyses, why we sometimes simplify our messages to raise awareness, and how we avoid classic mistakes by integrating other evaluation tools.

A cost-effectiveness analysis (or CEA) is a method that compares several possible actions on the basis of their cost and measured impact, without seeking to monetise this impact directly. It is mainly used when the desired effect has no obvious market value: for example, how much does it cost to prevent a premature birth, to restore a year’s sight, or to avoid a tonne of CO2? This type of analysis makes it possible to rank actions according to their relative effectiveness.

Unlike a cost-benefit analysis, the CEA does not try to translate everything into euros. It maintains a degree of humility by clearly distinguishing between costs (expenditure) and effects (concrete results, such as health or schooling). In practical terms, it allows us to answer a simple but decisive question: if I want to achieve such and such an impact, what is the most effective way of achieving it with my limited resources? It can also be used in a variety of areas, including education, health, the environment and development aid.
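As a rough illustration of this ranking logic, the Python sketch below computes a cost per unit of outcome for a few interventions and sorts them. The programme names, costs and outcome counts are hypothetical placeholders, not figures from any real evaluation, and the comparison only makes sense between interventions measured in the same outcome unit.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Intervention:
    name: str
    total_cost_eur: float  # money spent on the programme
    outcome_units: float   # results in ONE shared unit (e.g. years of sight restored)


def cost_per_outcome(intervention: Intervention) -> float:
    """Cost-effectiveness ratio: euros spent per unit of outcome."""
    if intervention.outcome_units <= 0:
        raise ValueError("outcome_units must be positive")
    return intervention.total_cost_eur / intervention.outcome_units


def rank_by_cost_effectiveness(interventions):
    """Return interventions sorted with the lowest cost per outcome first."""
    return sorted(interventions, key=cost_per_outcome)


# Hypothetical numbers, for illustration only.
options = [
    Intervention("Programme A", total_cost_eur=50_000, outcome_units=100),
    Intervention("Programme B", total_cost_eur=20_000, outcome_units=400),
    Intervention("Programme C", total_cost_eur=90_000, outcome_units=300),
]

ranking = rank_by_cost_effectiveness(options)
for option in ranking:
    print(f"{option.name}: {cost_per_outcome(option):.0f} EUR per unit of outcome")
```

Note that costs and effects stay in separate units throughout: the outcome is never converted into euros, only divided into them.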

In poor countries, many people suffer from vitamin A deficiency. This is one of the main causes of avoidable blindness in the world. It begins with a deficiency of this vital nutrient, which affects children, particularly those living in conditions of poor nutrition and limited access to healthcare. If left untreated, a child suffering from vitamin A deficiency will begin to suffer from night blindness and, over time, may go blind.

However, there is a very inexpensive solution that can prevent this disease from developing: providing vitamin A supplements. The question is whether this intervention really works: whether, for example, it saves more lives for the same cost than actions in France, where the statistical cost of saving a life averages 3 million euros, or whether it helps more than investing the same amount in another programme in the same country.

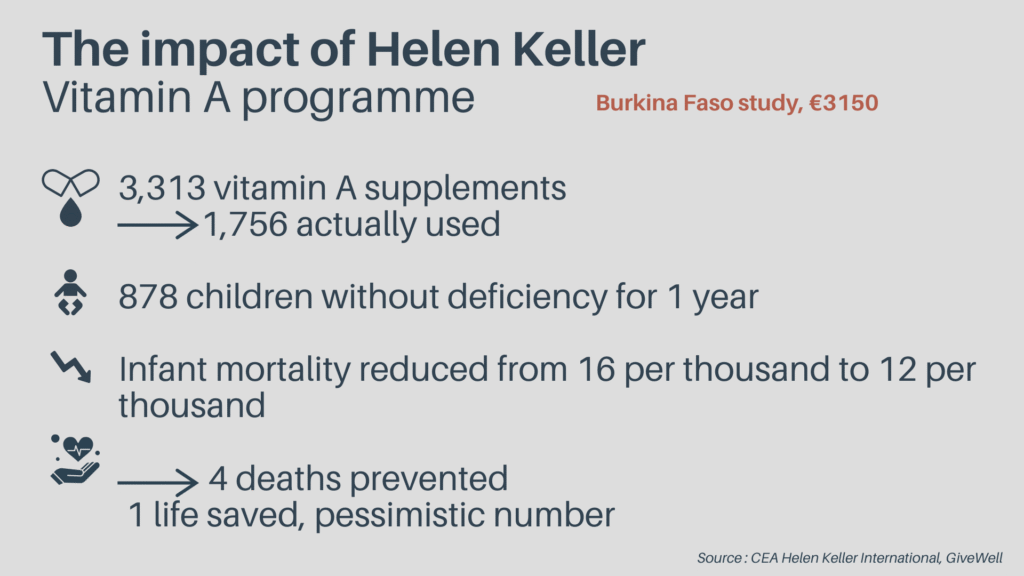

Take a look at an analysis by GiveWell, the leading international evaluator of health and poverty issues, of the cost-effectiveness of the vitamin A distribution programme implemented by Helen Keller International in Burkina Faso:

GiveWell’s analyses are among the most detailed and are regularly based on randomised controlled trials, the most rigorous type of scientific study, which allows the highest level of confidence. They are public, can be used by sector specialists, and help ensure that the final impact is high and that your support can really make a difference. However, with their 170 lines of calculations, multiple secondary Excel spreadsheets and links to dozens of academic studies, these analyses are not accessible to the general public.

Here’s a simplified version:

This type of analysis enables us to understand the final effects generated by a given amount and therefore to compare different interventions. The vitamin A distribution programme run by Helen Keller International is one of the most effective interventions for improving health. The same applies to the other associations recommended for health and poverty issues on our site.

Cost-effectiveness analyses make it possible to compare different interventions by assessing the relationship between the expected impact and the resources invested. In a world where needs far outstrip available resources, choosing where and how to act is not an abstract question, but a concrete trade-off with real consequences for the lives of beneficiaries.

Thanks to cost-effectiveness analyses:

However, like all tools, these analyses have limitations and should be used with caution.

In our communications, we often make very direct and powerful statements, such as “This action is 350x more effective than that one” or “€1 protects 5.3 farm animals”. This type of wording may seem simplistic, but it fulfils an essential role: it helps people who are motivated by their impact to become aware of the differences in impact between different philanthropic actions and gives them guidelines to help them make the right choice.

We are a science communication association, and like all efforts to popularise science, we have to translate complex analyses into clear, understandable messages.

When a media outlet announces “Smoking a packet a day reduces life expectancy by 10 years”, nobody asks to see precisely the 10 years lost. Similarly, when someone says “One euro saves 5.3 animals”, this does not mean that anyone can point to the precise 5.3 animals that have been saved, but that this is the expected impact of a donation based on the best estimates available.

To enable individuals to make informed choices about where to donate, it is essential to provide them with tools for comparison. We know that the differences in impact between interventions are immense, sometimes by a factor of 10, 100 or even more. However, without quantified comparisons, it is difficult to see these differences and draw conclusions. Without these benchmarks, people risk directing their donations towards interventions that “look good” rather than those that have the greatest real impact.

Of course, this simplified communication does not reflect all the uncertainties and nuances of the analyses. But it is a necessary compromise to make the information actionable. Like all popularisation work, it involves simplifications, but these remain based on rigorous data and transparent methodologies.

People naturally tend to underestimate the impact that effective donations can have, not least because of insensitivity to scale and identifiable victim bias.

When someone hears about 10,000 lives saved, they do not feel an emotional difference compared with 100 lives saved, even though the impact is 100 times greater. Similarly, we spontaneously feel more inclined to help an identifiable person in distress than to support a programme that effectively saves thousands of lives. These biases often lead to donation decisions that are less effective than they could be.

That’s why we’re communicating with clear, hard-hitting figures: to give individuals a more accurate picture of the differences in impact and encourage them to act accordingly. Of course, we recognise that these figures include a margin of uncertainty, but they remain a useful approximation, infinitely more informative than a total absence of benchmarks. By using these estimates, we enable individuals to see in concrete terms the potential impact of their generosity, and help them to direct their resources where they will have the greatest effect.

We recognise that our statements are sometimes presented with more certainty than they actually have. But there’s a reason for this: if we inserted too much uncertainty into our messages, it would be very difficult to promote effective generosity to the general public.

For example, if we were to say in every sentence:

“We estimate, with a significant margin of error, that under certain methodological assumptions, it is possible that a donation of €100 would enable 5 additional people to be vaccinated, although the confidence intervals vary and certain assumptions may be called into question”,

we would completely lose the mobilising and educational effect of our message.

This does not mean that we ignore these uncertainties. In fact, they are often taken into account by reducing the value put forward, and we always prefer to use the most prudent assumptions. Analyses may include margins of error, multiple reductions and sensitivity analyses, but they always consider the impact at the margin, and we seek to verify assumptions with other independent assessments.
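One way to picture these reductions is as successive multiplicative adjustments applied to a raw estimate. The sketch below is a simplified assumption about how such discounts can be combined, not the method of any particular evaluator; the adjustment labels and values are hypothetical.

```python
def apply_adjustments(raw_estimate: float, adjustments: dict[str, float]) -> float:
    """Scale a raw impact estimate by each adjustment factor in turn.

    Each factor is a multiplier between 0 and 1: 0.8 means "keep 80% of the
    estimate", i.e. a 20% reduction.
    """
    adjusted = raw_estimate
    for name, factor in adjustments.items():
        if not 0 <= factor <= 1:
            raise ValueError(f"{name}: factor must be between 0 and 1")
        adjusted *= factor
    return adjusted


# Hypothetical values, for illustration only.
raw_people_reached_per_100_eur = 10.0
adjustments = {
    "study results may not fully transfer to this context": 0.8,
    "part of the work might have been funded anyway": 0.75,
}

prudent_estimate = apply_adjustments(raw_people_reached_per_100_eur, adjustments)
print(f"Prudent estimate: {prudent_estimate:.1f} people per 100 EUR")
```

The figure that ends up in a headline would then be the adjusted value rather than the raw one, which is the sense in which uncertainty is already "priced in".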

We are committed to remaining transparent about our methodology and to publishing our full reasoning. Our figures are neither absolute truths nor empty slogans, but reasoned estimates, based on the best available data, that help us better understand where our donations can make a difference.

We take care to ensure that each figure posted on our site is accompanied by its source. If you notice that a source is missing or if you think that a figure should be revised in the light of new information, please let us know. We make a point of updating our estimates and incorporating quality sources.

For example, on issues of health and the fight against poverty, we rely on the GiveWell evaluations, which are public and fully accessible. We want you to be able to explore the rigour behind our recommendations.

While our messages are sometimes simplified in our mission to popularise, our analytical work is rigorous and nuanced. We know that cost-effectiveness analyses (CEAs) have their limits and should not be taken literally. That’s why we take a number of precautions when using them, and supplement these analyses with other tools.

CEAs are often carried out using different methodologies, which can distort comparisons. Comparing an intervention evaluated by GiveWell with one evaluated by the World Bank can be misleading, as the criteria and assumptions differ.

We ensure that comparisons are made between interventions evaluated using compatible and rigorous approaches, and we clearly identify methodological differences where they exist.

The results of a cost-effectiveness analysis (CEA) are often presented in the form of precise figures, but in reality they should be interpreted as ranges of values that take account of significant uncertainty.

Without taking into account the margins of error, we run the risk of drawing the wrong conclusions. For example, according to GiveWell’s latest evaluation, donations to Helen Keller International’s vitamin A distribution programme would have 8% more positive impact than the distribution of mosquito nets by Against Malaria Foundation (AMF). If we were to look only at this apparent difference, we might be tempted to remove AMF from our recommendations on the grounds that it is less effective. This would be a mistake, as the difference is not significant given the margin of error.
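To see why an 8% gap can be swamped by uncertainty, the sketch below simulates two estimates whose central values differ by 8% and counts how often the nominally better one actually comes out ahead. The lognormal shape and the spread (`sigma = 0.3`) are assumptions chosen for illustration; they are not GiveWell’s published uncertainty.

```python
import random


def probability_a_beats_b(median_a, median_b, sigma, draws=20_000, seed=0):
    """Estimate P(A > B) when both impact estimates are uncertain.

    Each impact is drawn from a lognormal distribution whose median is the
    point estimate, with the same log-scale spread `sigma` for both.
    """
    rng = random.Random(seed)  # fixed seed so the result is reproducible
    wins = 0
    for _ in range(draws):
        a = median_a * rng.lognormvariate(0.0, sigma)
        b = median_b * rng.lognormvariate(0.0, sigma)
        if a > b:
            wins += 1
    return wins / draws


# Central estimates in relative units: A is 8% above B. sigma is an assumption.
p = probability_a_beats_b(median_a=1.08, median_b=1.00, sigma=0.3)
print(f"A beats B in about {p:.0%} of simulated cases")
```

Under these assumed spreads, the option with the higher point estimate wins only somewhat more often than a coin flip, which is why a gap of this size is not a sound reason to drop the other option.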

If an intervention has strong positive consequences, we must also ask whether it has negative consequences, either directly or indirectly. Typically, if the government of a low-income country is running an immunisation programme, but lacks resources or stability, it may be that an association is more effective in implementing the programme, and it would seem appropriate to fund this association.

But if this is done in parallel with a functional activity of the State, it could lead the government to disengage from this project and potentially lose its capacity for action on health issues in the future. This possibility should not be ignored and alternatives should be actively sought, for example, recruiting people who know the local context and can be trained in best practice to share with the government departments in question.

A naive reading of a cost-effectiveness analysis can also have net negative consequences when harms are weighted as if they were a parameter like any other. Saying “to carry out this intervention, we’re going to have to lie and hurt a lot of people, but in the end it should have enormous positive consequences” is an excellent way of having a negative impact.

We live in a complex world, and trying to help others should not be done by ignoring basic universal principles such as “don’t lie, don’t hurt…” and we need to be sceptical if risks or negative consequences are part of the parameters. To take the example of vaccination again, we accept the risk of headaches for the immense benefits created by vaccines, but it took numerous studies to confirm this for each vaccine. Generally speaking, we need to be vigilant.

It is tempting to fund interventions that seem very effective, such as providing a pen to the management of the Agence Française de Développement so that it can sign international aid contracts. This looks like a valuable contribution because the signatures will help millions of people.

But given that other players were already prepared to finance this expenditure, our contribution does not provide any additional impact: we cannot take credit for the lives saved by French international aid simply because we offered a pen. In this case, the donation only frees up a few euros for the actor who would have paid, and your impact will be whatever they do with that sum (which will certainly have less impact).

When we evaluate an intervention, we ask ourselves whether our funding adds real value or simply replaces funding that would have existed without our contribution. If a government or major institution would have funded the action anyway, then our donation does not have the desired additional impact.

In its evaluations, GiveWell takes this into account by looking at whether an intervention would have been funded in the absence of a private donation. It examines the commitments of governments and major institutions, and analyses past grants that it came close to funding itself to see whether they were eventually taken over by another player. It often finds that many of the interventions considered were not funded in the end [1]. This means that additional donations can really fill a gap, by supporting interventions that would not otherwise have taken place.

So we think in marginal and counterfactual terms: instead of looking at a programme in isolation, we assess what would happen without our donation. We choose interventions where each additional euro makes it possible to achieve a change that would not otherwise have taken place. We ensure that our recommendations fill real gaps and create a tangible additional impact.
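The counterfactual reasoning above can be sketched as a simple expected-value calculation. This is an illustrative simplification, not GiveWell’s actual model; the function name, parameters and numbers are hypothetical.

```python
def counterfactual_impact(
    direct_impact: float,
    probability_funded_anyway: float,
    impact_of_freed_funds: float = 0.0,
) -> float:
    """Expected additional impact of a donation.

    - With probability (1 - p), nobody else would have paid, so the donation
      causes the full direct impact.
    - With probability p, another funder would have paid; the donation then
      only frees up their money, and its impact is whatever that money does.
    """
    p = probability_funded_anyway
    if not 0 <= p <= 1:
        raise ValueError("probability_funded_anyway must be between 0 and 1")
    return (1 - p) * direct_impact + p * impact_of_freed_funds


# Hypothetical values, in arbitrary "impact units".
pen_for_agency = counterfactual_impact(
    direct_impact=1_000_000, probability_funded_anyway=1.0, impact_of_freed_funds=1
)
unfunded_programme = counterfactual_impact(
    direct_impact=100, probability_funded_anyway=0.2, impact_of_freed_funds=10
)
print(pen_for_agency, unfunded_programme)
```

In this toy setup the pen scores far lower than the modest programme, despite its enormous apparent impact, because the pen would certainly have been paid for by someone else.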

CEAs are valuable, but they should not be our only compass. Other tools can be used to refine our decisions:

We explain in detail how to prioritise beyond the figures in a previous article.

At Mieux Donner, we use cost-effectiveness analyses with rigour and nuance, without falling into over-precision or oversimplification.

In our communications, we sometimes simplify the results to make them accessible and actionable.

In our analyses, we adopt a rigorous scientific approach, taking account of uncertainties and avoiding classic errors.

The aim is not to seek illusory precision, but to use the best tools available to make the best possible decisions. Our mission is to help people allocate their resources as efficiently and ethically as possible, and that means making informed use of cost-effectiveness analysis.
