The happiest countries and findings from the World Happiness Report 2026

Finland, Iceland, Denmark lead the 2026 ranking. Full list of 147 countries, key findings on social media and wellbeing, and how your donations create happiness.

We often hear that it is impossible to measure the impact of certain actions and that there is no way of comparing their effectiveness. This is a tempting idea, as it avoids the need to make difficult trade-offs between different interventions. However, the reality is quite different: there are many ways of prioritising, even in complex contexts.

If we really want to help, we have to ask ourselves how best to do so. Ignoring variations in impact is tantamount to assuming that all interventions are the same, whereas there are considerable differences between them. Even if evaluations are imperfect and sometimes uncertain, they are far more useful than no comparison at all.

In this article, we'll look at why prioritisation is essential if we are to help more. We will then explore the tools available, both quantitative and non-quantitative, to guide our decisions.

When it comes to helping, the question should be not only whether it is right to do so, but also how to help as much as possible with the resources available. Not all actions are created equal: some interventions are transformative and easy to implement, while others require far greater resources for less impact. When we have the opportunity to act more effectively, choosing to do so means helping additional people.

However, our intuitions are often wrong. We tend to favour actions that are visible, immediate or emotionally striking, without really assessing their effectiveness. This leads us to invest time and money in solutions that appear to help, but which in reality may have far less impact than other alternatives.

Not measuring impact is tantamount to assuming that all interventions have the same effect. Yet studies show that some can be 100 times more effective than others in the same cause. If we ignore these differences, we risk wasting precious resources and helping less than we could.

Even if we can't always measure with absolute precision, we know that some approaches are far more effective than others. Prioritising does not mean reducing all decisions to cold numbers, but rather asking the right questions:

Our aim is not to eliminate causes or impose a single way of acting, but to provide those who give and those who take decisions with concrete benchmarks to help them make more informed choices. In the following sections, we will look at how to measure impact using the quantitative tools available, and how to supplement them with qualitative approaches when quantification reaches its limits.

Cost-effectiveness analysis is a powerful tool for identifying the most effective interventions. It consists of measuring how much an action costs for a certain benefit, for example:

By using these analyses to compare different projects, we can allocate resources to the interventions that produce the greatest effect per euro invested.

Some interventions lend themselves easily to this approach, such as the distribution of mosquito nets or a vaccination programme, which can be evaluated in terms of disability-adjusted life years (DALYs) averted per euro given.
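As a rough illustration of this kind of comparison, here is a minimal Python sketch that ranks interventions by cost per DALY averted. The `Intervention` class, the programme names and all figures are hypothetical and chosen only to show the arithmetic; they are not real programme data.

```python
from dataclasses import dataclass


@dataclass
class Intervention:
    name: str
    cost_eur: float        # total programme cost in euros (hypothetical)
    dalys_averted: float   # estimated DALYs averted (hypothetical)

    @property
    def cost_per_daly(self) -> float:
        """Euros spent per DALY averted; lower is better."""
        return self.cost_eur / self.dalys_averted


def rank_by_cost_effectiveness(interventions):
    """Return interventions sorted from most to least cost-effective."""
    return sorted(interventions, key=lambda i: i.cost_per_daly)


# Illustrative figures only, not real programme data.
options = [
    Intervention("Programme A", cost_eur=100_000, dalys_averted=2_000),
    Intervention("Programme B", cost_eur=100_000, dalys_averted=50),
    Intervention("Programme C", cost_eur=40_000, dalys_averted=400),
]

ranked = rank_by_cost_effectiveness(options)
for item in ranked:
    print(f"{item.name}: {item.cost_per_daly:,.0f} EUR per DALY averted")
```

In this made-up example, the same budget averts 40 times more DALYs in Programme A than in Programme B, which is the kind of gap that only becomes visible once costs and benefits are put on a common scale.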

Scientific rigour is at the heart of this approach, thanks in particular to:

Although cost-effectiveness analysis does not capture everything, it provides valuable information for prioritising actions and avoiding funding ineffective interventions. For a deeper dive, see our article on assessing impact beyond the figures.

Many interventions seem difficult, if not impossible, to measure. Yet even in complex areas, methods exist for obtaining useful estimates. In development economics, for example, very different interventions can be compared using models based on empirical data.

Take the example of advocacy for tobacco taxation. It would be impossible to organise a large-scale randomised trial to test its effectiveness. However, by combining:

we can estimate the expected value of the number of life years gained and relate it to the advocacy budget, in order to compare its effectiveness with other public health strategies.
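A minimal sketch of such an expected-value estimate might look like the following. The inputs (probability that the advocacy succeeds, life years gained if it does, campaign budget) are assumptions made for illustration, as is the function name; a real model would break each of them down further and attach uncertainty ranges.

```python
def expected_life_years_per_euro(
    p_success: float,
    life_years_if_success: float,
    budget_eur: float,
) -> float:
    """Expected life years gained per euro of advocacy budget."""
    if not 0.0 <= p_success <= 1.0:
        raise ValueError("p_success must be between 0 and 1")
    if budget_eur <= 0:
        raise ValueError("budget_eur must be positive")
    # Expected value: probability of success times the gain if successful.
    return p_success * life_years_if_success / budget_eur


# Hypothetical inputs, for illustration only.
advocacy = expected_life_years_per_euro(
    p_success=0.10,                # assumed chance the tax increase is adopted
    life_years_if_success=50_000,  # assumed life years gained if adopted
    budget_eur=500_000,            # assumed campaign budget
)
print(f"{advocacy:.3f} expected life years per euro")
```

Even with a low probability of success, the expected value per euro can then be set side by side with the same ratio for a directly measurable programme.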

The key is not to confuse imprecision with the impossibility of comparison. Even when the figures are not exact, they remain essential guides for directing resources where they have the greatest impact.

Not seeking to assess the impact of an action implicitly amounts to assuming that all interventions are equal. Yet we know that some have a drastically greater impact than others. Ignoring these differences means running the risk of inefficiently spending resources that could save more lives or improve well-being even more. The aim is not to obtain perfect figures, but to use the best available data to guide our decisions in an informed way.

Areas where impact measurement is more uncertain, such as systemic reforms or public policies, should not be ignored on the grounds that they are difficult to evaluate. It is true that some interventions, such as the distribution of mosquito nets or vitamin A supplementation, are easier to quantify than others, such as the fight against corruption or the defence of human rights. That doesn't mean we shouldn't consider them: we're trying to help as best we can and shouldn't limit ourselves to what's easy to measure.

When it is difficult to quantify an impact with acceptable precision, this does not mean that it is impossible to prioritise between interventions. In many cases, it is possible to establish orders of magnitude, compare different approaches and adjust our methods in line with new evidence. Even when direct quantification is complicated, other tools exist to guide our choices.

Here is an overview of the key methods that can help guide our decisions, even when quantitative approaches are not sufficient to make choices.

When prioritising complex decisions, it is essential to recognise that each assessment tool has its own strengths, weaknesses and areas of application. Some tools are quick but less accurate; others are more comprehensive but take longer to implement.

Some criteria to consider when evaluating a tool:

By combining several tools with complementary characteristics, we can limit assessment errors and obtain a more balanced view. For example, a cost-effectiveness analysis can be enhanced by expert feedback and factor weighting models.

Progressive iteration involves starting with a superficial analysis of a broad set of options before focusing resources on the best alternatives. Rather than trying to analyse a problem in depth straight away, the idea is to gradually refine the assessment in several stages.

Example of application:

This approach allows resources to be concentrated on the most promising interventions, while reducing the risk of ruling out a good option too early for lack of information.
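The staged narrowing described above can be sketched in a few lines of Python. The function name, the option names and the scores are all hypothetical; the only idea taken from the text is that a cheap, rough assessment is applied to every option and a more detailed one only to the shortlist.

```python
def progressive_shortlist(options, stages):
    """Narrow a list of options through successive evaluation stages.

    `stages` is a list of (score_function, keep_count) pairs, ordered from
    the cheapest, roughest assessment to the most detailed one.
    """
    remaining = list(options)
    for score, keep in stages:
        # Keep only the top `keep` options according to this stage's score.
        remaining = sorted(remaining, key=score, reverse=True)[:keep]
    return remaining


# Hypothetical options with a rough and a detailed score (higher is better).
candidates = {
    "Option A": {"rough": 3, "detailed": 6.0},
    "Option B": {"rough": 9, "detailed": 4.5},
    "Option C": {"rough": 7, "detailed": 8.2},
    "Option D": {"rough": 2, "detailed": 3.0},
    "Option E": {"rough": 8, "detailed": 7.1},
}

stages = [
    (lambda name: candidates[name]["rough"], 3),     # quick screen of all options
    (lambda name: candidates[name]["detailed"], 1),  # in-depth look at the top 3
]

shortlist = progressive_shortlist(candidates, stages)
print(shortlist)
```

Keeping several options after the rough screen, rather than only the top one, is what protects against discarding a good option on thin evidence: here Option B leads the rough ranking but drops out once examined in detail.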

When choices are complex and involve several factors, we can use systematic methods to structure our reasoning:

One of the most fundamental tools in effective decision-making is counterfactual analysis. This involves not simply assessing the apparent impact of an intervention, but asking the following question: what would have happened if this intervention had not taken place?

For example, if an organisation funds bursaries for bright students in a developing country, we need to ask what impact a bursary has on education and whether these students could have obtained a bursary by other means (government programme, other NGOs, private institutions). If this is the case, then the real impact of the programme is less than it first appears.

Counterfactual reasoning thus makes it possible to avoid overestimating the effects of an action and to identify interventions that make a real difference.
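The arithmetic behind the bursary example can be sketched as follows. The numbers are invented for illustration, and the assumed share of students who would have found funding elsewhere is exactly the quantity that is hard to know in practice.

```python
def counterfactual_impact(outcome_with: float, outcome_without: float) -> float:
    """Impact attributable to the intervention: observed minus counterfactual."""
    return outcome_with - outcome_without


# Hypothetical figures for the bursary example, for illustration only.
students_funded = 100     # students who studied with a bursary from the programme
would_study_anyway = 60   # assumed: would have obtained funding by other means

apparent_impact = students_funded
real_impact = counterfactual_impact(students_funded, would_study_anyway)

print(f"Apparent impact: {apparent_impact} students")
print(f"Counterfactual impact: {real_impact} students")
```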

The Magnitude, Potential for Improvement and Neglectedness framework is a useful model for prioritising causes and interventions, based on three key criteria:

| Criterion | Central question |
|---|---|
| Magnitude | Does the problem affect a large number of people? To what extent are those affected impacted? |
| Potential for improvement | How much of the problem can be effectively resolved? |
| Neglectedness | How many resources are already dedicated to this problem? |

This model makes it possible to concentrate efforts on problems where an intervention can have the greatest impact. For example, a problem that is very serious but already widely addressed, such as cancer, might be less of a priority than an equally serious but neglected problem, such as vitamin A deficiency in certain developing countries.

Weighted Factor Models (WFM) are tools for integrating several criteria into a decision. Rather than evaluating an intervention according to a single indicator (cost per life saved), this model combines several dimensions to obtain a more comprehensive assessment.

Operating principle:

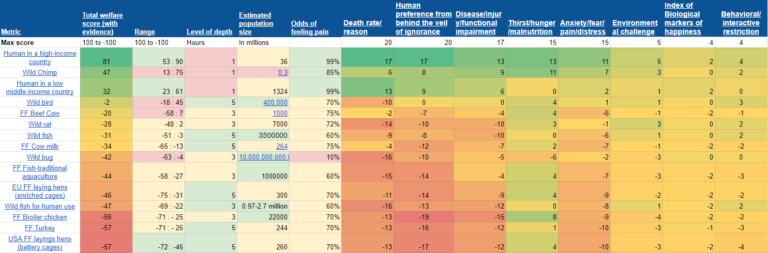

Example of a weighted factor model (Charity Entrepreneurship, 2019)
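As a minimal sketch, assuming the common form of a weighted factor model in which each option receives a weighted average of its scores on each criterion, the calculation could look like this. The criteria, weights and scores below are hypothetical and are not taken from the Charity Entrepreneurship example.

```python
def weighted_score(scores: dict, weights: dict) -> float:
    """Weighted average of criterion scores; weights need not sum to 1."""
    if scores.keys() != weights.keys():
        raise ValueError("scores and weights must cover the same criteria")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(scores[c] * weights[c] for c in weights) / total_weight


# Hypothetical criteria and weights (higher weight = more important).
weights = {"cost_effectiveness": 3, "evidence": 2, "neglectedness": 1}

# Hypothetical scores on a 0-10 scale.
interventions = {
    "Intervention A": {"cost_effectiveness": 8, "evidence": 4, "neglectedness": 6},
    "Intervention B": {"cost_effectiveness": 5, "evidence": 9, "neglectedness": 7},
}

results = {name: weighted_score(s, weights) for name, s in interventions.items()}
best = max(results, key=results.get)

for name, value in results.items():
    print(f"{name}: {value:.2f}")
print(f"Highest weighted score: {best}")
```

The weights encode a value judgement, so it is worth checking how the ranking changes when they are varied: in this example, a heavier weight on cost-effectiveness would put Intervention A ahead.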

When data is limited, it is often useful to draw on the advice of specialists with in-depth knowledge of a field. However, to avoid individual and subjective bias, several methodologies can be used to structure this consultation of experts:

In cases where decisions are complex and lack precise data, these approaches help to reduce uncertainty and make informed decisions based on the best available knowledge.

A common mistake in impact assessment is to focus solely on successes. Yet analysing what didn't work helps to avoid repeating costly mistakes.

For example:

The Scared Straight programme, which aimed to reduce delinquency by exposing young people to the prison environment, not only proved ineffective but actually increased crime.

The PlayPumps, water pumps activated by children playing games, were widely funded but proved to be useless and impractical for local communities.

Studying these failures helps identify biases and design errors that could have been avoided, and improves future interventions.

In the face of uncertainty, one of the best strategies may be to invest in gathering information before taking large-scale action. Rather than massively funding an intervention with uncertain effects, it may be more effective to start with targeted experiments:

This approach helps to limit risk while maximising learning, so that future decisions can be made in a more informed way.
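One way to reason about whether a pilot is worth its cost is the expected value of perfect information, a standard decision-analysis quantity that the article does not name but that fits the idea. The sketch below assumes a choice between a well-understood "safe" option and an uncertain one; all values are hypothetical and expressed in the same arbitrary impact units as the pilot cost.

```python
def expected_value_of_perfect_information(
    p_works: float,
    value_if_works: float,
    value_if_fails: float,
    value_of_safe_option: float,
) -> float:
    """Upper bound on what it is worth paying to resolve the uncertainty."""
    if not 0.0 <= p_works <= 1.0:
        raise ValueError("p_works must be between 0 and 1")
    ev_uncertain = p_works * value_if_works + (1 - p_works) * value_if_fails
    # Without more information, we pick the option that is better on average.
    best_without_info = max(value_of_safe_option, ev_uncertain)
    # With perfect information, we pick the better option in each scenario.
    best_with_info = (
        p_works * max(value_of_safe_option, value_if_works)
        + (1 - p_works) * max(value_of_safe_option, value_if_fails)
    )
    return best_with_info - best_without_info


# Hypothetical values, for illustration only.
evpi = expected_value_of_perfect_information(
    p_works=0.3,               # assumed chance the uncertain intervention works
    value_if_works=300,        # assumed impact if it works
    value_if_fails=20,         # assumed impact if it does not
    value_of_safe_option=100,  # assumed impact of the well-understood option
)
pilot_cost = 25                # assumed cost of a targeted experiment

print(f"Information is worth at most {evpi:.0f} units; pilot costs {pilot_cost}")
```

Because a real pilot never removes all uncertainty, this figure is only a ceiling: a pilot costing more than it cannot be justified on these assumptions, while one costing much less is a plausible candidate.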

Many of the debates surrounding impact assessment stem from a misunderstanding: just because we want to prioritise more effectively does not mean we want to quantify everything. These tools show that it is possible to prioritise even in the absence of precise figures. Contrary to the image of a cold, purely numerical utilitarianism, prioritisation can and must incorporate qualitative and pragmatic methods.

The aim is not to have artificial precision, but to avoid total arbitrariness and the illusion that all actions are equal.

It's easy to criticise quantification by reducing it to an obsession with numbers. But in reality, the best prioritisation processes combine quantitative and qualitative approaches to make more informed decisions.

Even in complex areas, there are tools available to assess and compare the impact of interventions. Rather than giving up in the face of uncertainty, we need to use the best available methods to guide our decisions. Measuring impact should not be a bureaucratic exercise: it is a powerful tool for doing better and helping more.

When it comes to helping people as effectively as possible, a frequent criticism targets the quantitative approach: some people see the use of numerical indicators as a form of reductionism to the detriment of human realities. Yet decisions about impact are essential if we are to meet the needs of those who suffer most.

Rather than falling into a naive utilitarianism that relies solely on raw figures, we have at our disposal qualitative and conceptual approaches that allow us to integrate the complexity of social and humanitarian interventions, and to give with real impact.

We analyse the best charities using the same prioritisation principles described in this article.


